Latest News

- AIR kick-out summary

- Embodied Cognition for automated driving – AIR members guest speakers at SAFER seminar

- Istituto Italiano di Tecnologia visited AIR

- An Engineer with a Passion for Health

- AIR workshop: Autonomous systems in industrial environments

- AIR-Consortia on tour

- ICMR-Conference

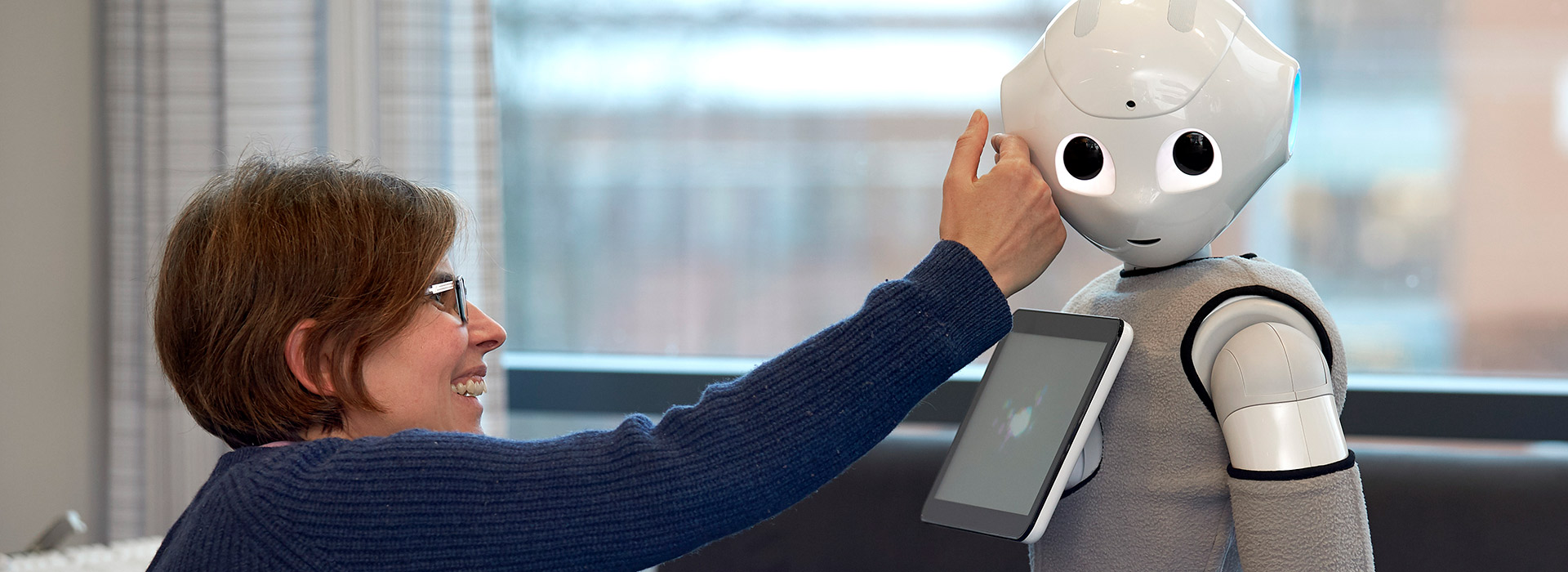

- World Alzheimer’s Day – Halmstad researchers are developing solutions for dementia patients

- The researcher who wants to teach machines to see